Why Computers Only Have Two Fingers

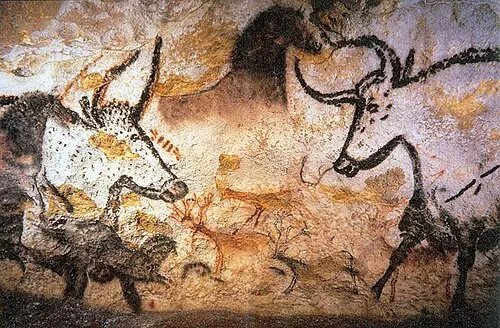

Since ancient times, humans have sought to experience the world around them and preserve the knowledge they discovered. Carving hunting scenes on cave walls or recording words on papyrus to pass on wisdom were all part of that effort. This is one of the most fundamental aspects of being human: understanding the world and communicating with others.

In particular, there have been longstanding attempts to capture continuously changing natural phenomena like sound and light as they are. For example, a vinyl record etches a singer’s voice or the subtle vibrations of an instrument into the continuous grooves on its surface. Methods that represent real-world information while preserving its continuous nature are called analog.

Yet the computer, a cornerstone of modern civilization, took an entirely different path for handling information. It chose the digital approach: the idea of representing real-world information using only a few clearly distinguishable values.

How can such simplicity capture the analog real world? Why did computers adopt this approach? Let’s see how just two digits, 0 and 1, can represent sound, text, images, and everything we can imagine.

Computers That Slice the World

Digital is a method of dividing the world’s information into small pieces and expressing each one as a simple number. Each of these pieces becomes the most basic unit of information a computer can understand.

Take photographs as an example. When we shoot with a film camera, light records the image on the film surface as a continuous variation of tone and brightness. This is fully analog information.

But a digital camera first divides the image into tiny square dots called pixels. Each pixel converts the intensity and color of the light detected in its small region into numbers (commonly one each for red, green, and blue) and stores them. In the end, the subject is transformed into millions of pixel values, a vast set of digital data.
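To make the slicing concrete, here is a minimal sketch in Python. It assumes a made-up continuous scene (the `brightness` function is purely hypothetical) and a grayscale image in which each pixel keeps one brightness number from 0 to 255. Real cameras are far more involved, so treat this as an illustration of the idea rather than how any particular camera works.

```python
import math

def brightness(x: float, y: float) -> float:
    """Hypothetical continuous scene: brightness in [0.0, 1.0] at any point (x, y)."""
    return 0.5 + 0.5 * math.sin(2 * math.pi * x) * math.cos(2 * math.pi * y)

def digitize(width: int, height: int, levels: int = 256) -> list[list[int]]:
    """Sample the scene at the center of each pixel and store an integer level."""
    image = []
    for row in range(height):
        y = (row + 0.5) / height
        image.append([
            # Scale to 0..levels-1 and clamp so a brightness of exactly 1.0 still fits.
            min(levels - 1, int(brightness((col + 0.5) / width, y) * levels))
            for col in range(width)
        ])
    return image

# A tiny 4x3 "photo": every pixel is now just a whole number.
pixels = digitize(4, 3)
for row in pixels:
    print(row)
```

Increasing `width` and `height` slices the scene more finely, but the result is always a finite grid of whole numbers.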

So why does the computer insist on slicing the world into digital pieces?

The answer is clarity of information and resilience against external interference. Analog signals are continuous, which makes them sensitive to the slightest change; even minor ambient noise or electrical interference can blur or distort the original. Digital, however, represents information as clearly distinct states. Even with some interference, the computer can still recognize the original value, which keeps the information far more stable.

Information expressed this clearly can be handled without loss. If you photocopy a page of text or a drawing, then photocopy the copy, and repeat this just a few times, the content gradually becomes blurry and smeared until the original is barely recognizable. Digital information, on the other hand, can be copied millions of times or transmitted to the other side of the globe while remaining perfectly identical to the original.
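The photocopy comparison can be simulated in a few lines of Python. This is only a sketch under simple assumptions: every copy adds a small random error of at most 0.1 to each value, and the digital copy is re-read as the nearer of 0 and 1 after each generation. The function names and the noise size are invented for illustration.

```python
import random

def noisy_copy(values, noise=0.1, rng=random):
    """Copy every value, adding a small random error of at most +/- noise."""
    return [v + rng.uniform(-noise, noise) for v in values]

def restore_bits(values):
    """Read each noisy value as the nearer of 0 and 1."""
    return [1 if v >= 0.5 else 0 for v in values]

rng = random.Random(0)  # fixed seed so the run is repeatable
original = [0, 1, 1, 0, 1, 0, 0, 1]

analog = [float(v) for v in original]
digital = list(original)
for _ in range(50):
    # Analog: the error from each generation stays in the copy and accumulates.
    analog = noisy_copy(analog, rng=rng)
    # Digital: the same noise is added, then removed by re-reading as 0 or 1.
    digital = restore_bits(noisy_copy(digital, rng=rng))

print("analog :", [round(v, 2) for v in analog])
print("digital:", digital)
print("digital copy identical to original:", digital == original)
```

Because the error in this sketch never exceeds 0.1, a noisy 0 or 1 always stays on the correct side of 0.5, so the digital copy matches the original after every generation while the analog values drift.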

But ternary (using 0, 1, 2) or quaternary (using 0, 1, 2, 3) systems are also digital, so why did computers adopt binary, which uses only two states, as their core principle?

Computers Have Two Fingers

Humans have ten fingers and use the decimal system, so you could think of a computer using binary as having two fingers. There is a very practical hardware engineering reason why computers use binary.

Computers run on electricity, and electrical signals carry more inherent variability than you might expect. Signals weaken as they travel through wires, components degrade over time and underperform, and heat generated during operation causes signals to fluctuate. Because of this signal degradation, dividing the signal into many levels would make it easy to mistake one level for another.

The most reliable way to distinguish information amid this instability is to use only two states: clearly high (1) or clearly low (0). Even if the signal weakens somewhat or picks up a bit of noise, as long as it is high enough to be read as 1 or low enough to be read as 0, the computer can correctly interpret the information. Binary is far more forgiving of small signal variations, which is what makes it possible to build stable computers.

Figure: the received signal is read as the nearest level. Green = correct read, red = error.
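The same comparison can be worked through in Python. The sketch assumes a signal range from 0 to 1 with evenly spaced levels, and a receiver that reads the nearest level; these numbers and function names are illustrative assumptions, not the voltages of any real circuit.

```python
def read_level(voltage: float, levels: int) -> int:
    """Return the index of the nearest of `levels` evenly spaced values in [0, 1]."""
    index = round(voltage * (levels - 1))
    return max(0, min(levels - 1, index))

def send(level: int, levels: int, noise: float) -> int:
    """Transmit `level` as a voltage, disturb it by `noise`, and read it back."""
    voltage = level / (levels - 1) + noise
    return read_level(voltage, levels)

def noise_margin(levels: int) -> float:
    """Half the spacing between levels: smaller disturbances are read correctly."""
    return 0.5 / (levels - 1)

# The same disturbance (-0.2) applied to the highest level of each system.
print(send(1, 2, -0.2))   # binary: 0.8 is still closest to level 1
print(send(3, 4, -0.2))   # quaternary: 0.8 is now closest to level 2, an error

for n in (2, 3, 4, 10):
    print(n, "levels tolerate noise below", round(noise_margin(n), 3))
```

With two levels, the signal can drift by almost half the full range and still be read correctly. With four levels the tolerance shrinks to a sixth of the range, and with ten levels to roughly a twentieth.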

In the end, binary was the most practical choice engineers could make to build reliable machines from the imperfect electrical signals of the real world.

Bits

We mentioned that computers represent information using two states: 0 and 1. Each individual 0 or 1, the smallest unit of information a computer handles, is called a bit. Every sophisticated task we perform on a computer ultimately comes down to manipulating these tiny bits.

In everyday life, you may be more familiar with the unit byte, as in megabytes or gigabytes. One byte consists of 8 bits.
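As a quick illustration, the Python sketch below shows how many different values 8 bits can express and what one byte looks like written out as bits. The helper names `to_bits` and `from_bits` are made up for this example.

```python
def to_bits(value: int, width: int = 8) -> str:
    """Write a non-negative whole number as a string of `width` bits."""
    if not 0 <= value < 2 ** width:
        raise ValueError(f"{value} does not fit in {width} bits")
    return format(value, f"0{width}b")

def from_bits(bits: str) -> int:
    """Turn a string of 0s and 1s back into a whole number."""
    return int(bits, 2)

print(2 ** 8)                 # 256 distinct values fit in one byte
print(to_bits(65))            # 01000001
print(from_bits("01000001"))  # 65
```

Each extra bit doubles the number of values that can be represented, which is why 8 bits give 2 × 2 × 2 × 2 × 2 × 2 × 2 × 2 = 256 combinations.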

Wrap-up

We started from the most fundamental way computers perceive the world, the difference between analog and digital, and explored the practical reasons why computers chose binary, the system of 0s and 1s.

In the next post, we will look at how these bits can be used to make logical decisions, and how that logic is implemented in actual circuits.